So yes, it turns out that I attended 26 sessions at #theta2015. This link is to the tag here on my blog, so in addition to all my live-blogged notes it self-referentially includes this post and any future thoughts arising (I have at least one post planned on altmetrics and oral presentations). For those daunted by the thought of that much reading (including my future self, for when asked what I got out of it), here’s a more scannable summary.

Highlighted are those titles that I particularly want to refer back to for one reason or another, which may bear only passing resemblance to those titles that will be of interest to others.

Day 1:

- Waves of the Future: Possibilities for Higher Education: throws out a bunch of exciting/terrifying trends affecting higher education and posits some provocative scenarios for the future (open wins; closed wins; automation wins; creative renaissance). Much to think about.

- Changing times, emerging generations: a snapshot of the megatrends affecting higher education: more trends, (Australian) demographics-focused. My notes were brief, just reflecting my own discomfort with this kind of lumping which can neglect vulnerable groups. To which I’d now add that I could see the value of saying “Most people are comfortable with this technology and it’s the new way of the world” if you immediately follow it up with “So how do we support people who aren’t?”

- Integrating user support for eResearch services within institutions. Lessons learned from AeRO Stage 2 User Support Project: successfully introduced a maturity model for services to provide user support, after realising that a completely centralised approach wasn’t workable. I’ve come across the maturity model idea before so it was great to hear more about it and its advantages here; it seems like something that could be useful in all sorts of contexts both in getting ourselves/other institutions up to scratch, and in supporting researchers and other staff (and students too, why not?) to upskill in all sorts of areas of expertise.

- 264 students, eight courses, 792 High Definition video streams, no walls: primarily a ‘look at our awesome technology/learning space’ presentation (re a wet lab that can accommodate 8 simultaneous classes – it is in fact awesome) but also good takeaways about the power of stakeholder engagement and prototyping in a successful project.

- Forging productive partnerships between learning, teaching, library, and IT: panel discussion about value of collaboration between these groups. Executive summary: it’s super valuable, let’s all do more of that (but also some challenges).

- Where does Campus Learning become Online Learning? Emerging trends in learning space design and usage: panel discussion on developing good learning space from various perspectives (academic and IT definitely in the mix). I noted a linkage to the value-of-collaboration panel above; also now note the link to maturity models implied by the idea that putting slides online, while not actual online teaching, can be a starting point.

- A Real-time Step into Space: Reducing complaints about study space by providing monitored “satellite” spaces (with “shushing”) and creating an app linked to gatecount cameras to tell students where they can find free spaces. This spawned a brief Twitter discussion in which @GraemeO28 asked if there was an app to shush students and I suggested (accidentally under the wrong Twitter account) a shushing librarian avatar on a wall screen activated by decibel levels.

- Video-conferencing and teaching – From outback Queensland to Ireland and back again: looked at student engagement with lectures using video-conferencing a) class from one campus to another and b) video-conferencing to enable lectures by industry experts. Some good discussion about challenges and benefits (especially with the industry engagement).

- Connecting data to actions for improved learning: Scan of much-increased sources of data that can be used for learning analytics to predict and head off failure/drop-outs. Idea of letting students track their own data along with health, cf Fitbit-style wearables. (I’d point out that health-monitoring wearables have fallen prey to unconscious bias: male designers fail to include monitoring of periods; white designers accidentally make the pulse detection fail with dark skin. So we’d need to be careful of things like this.) In questions the ‘creepy’ factor was also discussed.

- Innovations in publishing; giving control back to authors: I didn’t write down much detail of this good overview of the trend to open in publishing. Being familiar with that, for me the interesting part is the question raised by the conclusion about how we need to shift the power from the publishers (who still have it, even under open access) to the authors. The question being: how do we do this? And given that it requires authors to have knowledge, do they even want it? Sometimes with great power comes great mental fatigue…

Day 2:

- Learning Sciences & the Impact on Learning Technologies and Learning Activities: This turned out to be the session I’d come for: a great introduction to how we know a whole lot about learning and we should be designing learning tools around good learning practices. People aren’t good at estimating their own competence – but increasingly there are adaptive learning solutions out there that can.

- How will digital humanities in the future use cultural data?: primarily an overview of how digital humanities scholars use data now. Suggests talking to researchers about what materials they need, investigating APIs, and providing training.

- B(uild)YO skilled Data Librarian: flipped classroom so I was too busy participating to take notes

- ‘Let’s be brief(ed)’: Library design, education pedagogy and service delivery: participatory design and built pedagogy in redesigning library space for an architecture library. The library as reference material for architecture students, as well as including varied learning/study spaces.

- Evolving customer engagement: Using mobile technology and gamification to improve awareness of and access to library services: used Blogger and Google Forms to make their regular library orientation tour more self-directed and fun. So evolutionary rather than revolutionary. Appreciated that they mentioned the (significant) time it took to do the work at various stages, also the demo with a custom-designed ‘game’ for the session.

- Towards a New Library of Resources for Higher Education Learning and Teaching: presentation focused on the choice of a vocabulary (to improve search effectiveness) and work involved in mapping terms.

- Curtin Library Rocking the (meta)data: a nice point about the line between data and metadata not being clear. Mostly about a specific digitisation project; interesting takeaway that this was seen as the best way for librarians to develop data skills ‘on the job’, and that they would need to learn new skills for each new project. So then does that mean we shouldn’t worry about generic upskilling, but just jump in? It certainly implies that we shouldn’t assume the learning curve (and the time/training/money needed for that) will be less on second or subsequent projects.

- Elements Integration – lets chat about Research Repository and populating Researcher Profiles: unfortunately garnered far more prospective Elements users than current ones, which unbalanced the desired discussion and probably didn’t turn out to be very helpful for anyone.

- KISS Goodbye to roadblocks in scholarly infrastructure: a bit about open access, but particularly interesting discussion on the need for persistent identifiers especially in the context of the proliferation of standards. ORCID’s tried to avoid pitfalls but early days…

- Reimaging the University Helpdesk for the Next Generation of Digital Research Skills: introduced various support services including software/data carpentry workshops, research tool ‘speed dating’, hacky hour at the bar, Research Bazaar. Idea that everyone works in different ways so need different methods. This is resource intensive so I especially liked the idea of essentially matchmaking researchers who know a tool with those who want to learn it to develop a sustainable research community. In later discussion @kairos001 pointed out this is also hard to sustain in a small environment where there isn’t a critical mass of researchers knowing any basic tools. So maybe we need to collaborate with other local unis/CRIs, or even facilitate bringing in external experts.

Day 3:

- From Information to Meta Knowledge: Embracing the Digitally and Computable Open Knowledge Future: state of the nation of research libraries in China which are rapidly changing to support research. Culminated in a shocking mention that these libraries are currently hiring more STEM grads than library grads – seen as easier to teach STEM grads library skills than to teach library grads the needed STEM skills. This was clarified as a temporary situation – ideally want to get library schools to restructure somehow to support needed skills. Still felt to me like the focus on STEM might be at the expense of other important aspects of librarianship and even of research viewed more broadly.

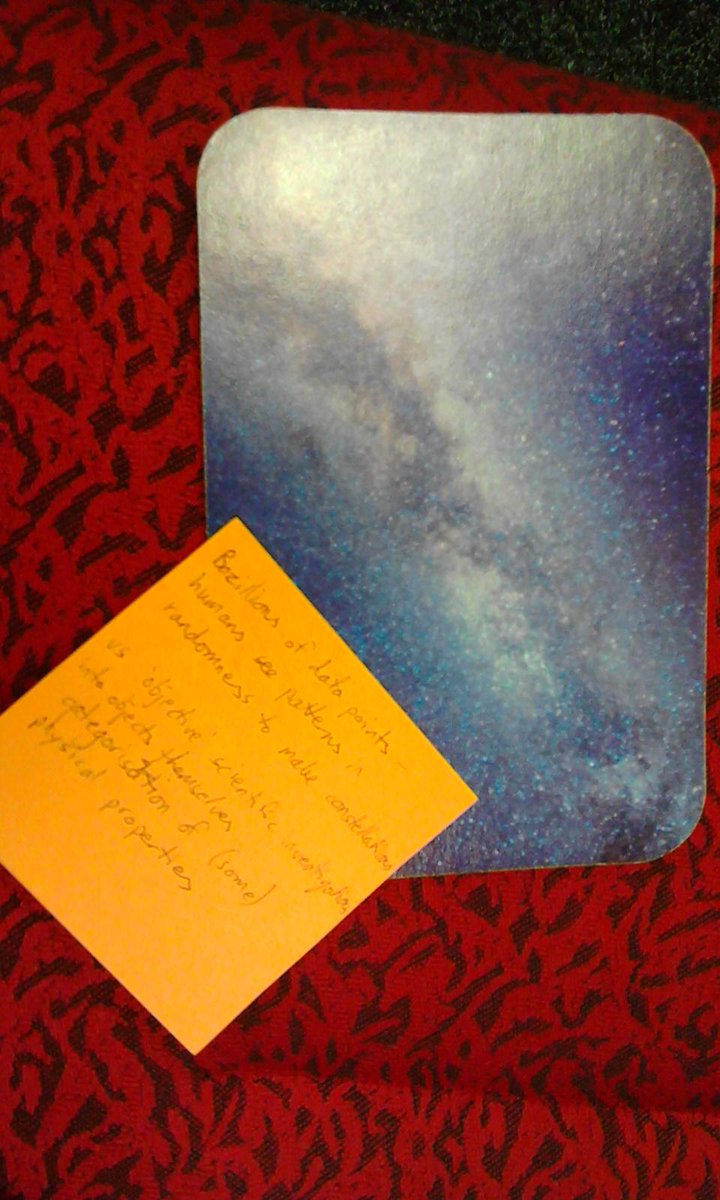

- Creating Connections in Complexity: discussions at the intersection of big data & learning: flipped presentation but I got a few notes down on the question of where is the ‘human’ in analytics. Provided me with thinky thoughts about not losing the individual in the pattern, and about not devaluing creativity in favour of empirical/quantifiable analyses.

- Design Develop Implement – A team-based approach to learning design: helped people wanting to design new learning objects / programs through a short program of workshops and consultations. I didn’t get a lot out of this session but there may be more of use from their website.

- Copyright and compliance when the law can’t keep up: Issues with innovation in online classrooms: a good discussion of navigating a middle way between hyper-compliance and total disregard of copyright law, by focusing on managing risk. Gave a useful checklist of things to think about when making decisions, with examples.

- Flexible, Secure and Sharable Storage for Researchers: overview of the storage solution they developed for research data and some of the features they built in. Developed for working data – not intended to provide storage for published data – but some thought put into archival (primarily taking the “long-term storage space is cheap, let’s keep everything” approach and figuring they’ll deal with the long-term costs of this if it gets popular enough).

- Better connected education – The future classroom & campus: high-level overview of trends in ICT as affecting higher ed. Not in a style I was able to easily note-take so hopefully there’ll be slides online.

And finally:

My #theta2015 lego mini-me has found a book, a 'shh'-mug, and some more minifig friends. #thetaselfie #2ndattempt pic.twitter.com/LV9DC6B1v6

— Deborah Fitchett (@deborahfitchett) May 15, 2015